Drop it in.

We'll remember.

Your memory. The machines handle it. You oversee.

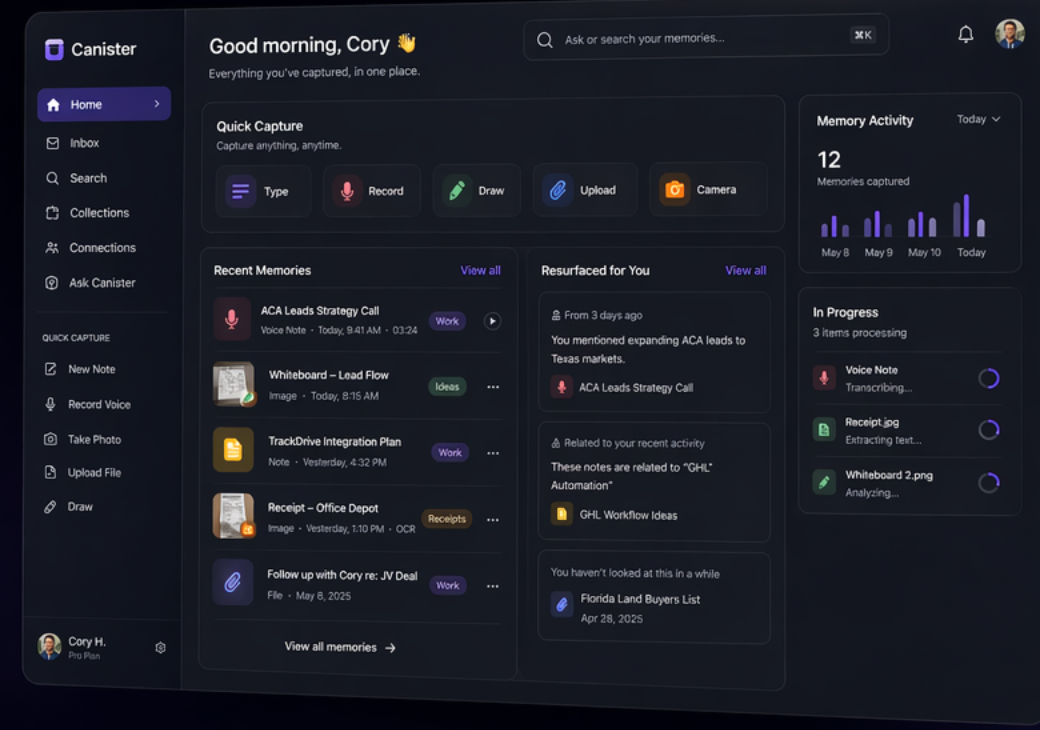

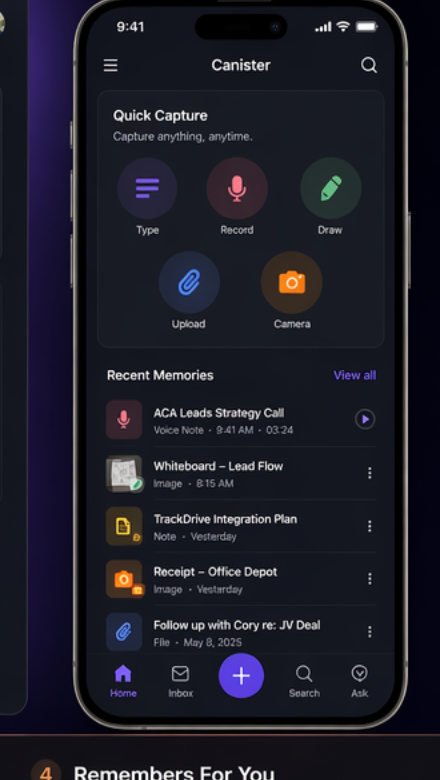

Every conversation across every app. Every idea you've ever had. Every AI session you've ever run. Kanister captures it all, connects the dots, and makes it instantly findable. No folders. No tagging. No effort. Just ask.

Never lose another idea, conversation, or context again.

Find anything in half a second

"What did Sarah say about the apartment?" — instant answer with the exact conversation, date, and source. Across every app, every channel, all at once. Natural language, no search operators needed. Under 500ms, every time.

Zero-effort capture

Connect Telegram, Gmail, Slack, WhatsApp once. Messages flow in forever. Drop voice notes, screenshots, PDFs, random thoughts into the capture bar. Kanister reads, tags, and connects everything automatically. You never organize a thing.

AI that connects the dots

Kanister sees the patterns you miss. It links people across conversations, surfaces commitments you forgot, flags contradictions between what someone said Monday versus Thursday, and extracts follow-ups before they fall through the cracks.

Your AI agents share one brain

Run three Claude terminals and a Cursor instance? They all talk to Kanister — and now through Agent Telepathy, they talk to each other. Shared context, coordinated work, zero conflicts. Your agents operate as a team.

Your data stays yours

Self-hosted on your machine. No cloud dependencies for core features. Your conversations, your thoughts, your AI sessions — they live on your hardware. Always.

Works with every tool you use

12 MCP tools for any AI agent. 55+ REST API endpoints for any integration. Webhooks for n8n, Zapier, custom scripts. If your tools can send data, Kanister consumes it. If your agents need memory, Kanister delivers it.

Your AI agents just got a shared brain.

You run Claude terminals. Cursor. Custom bots. They all talk to Kanister. Now they talk to each other through it. Presence, broadcasts, shared context, coordinated work — your agents operate as a team. You oversee.

$ mb status

● claude-main active DB migration layer

● claude-tests active Frontend test suite

● cursor-refactor active API endpoint cleanup

⟩ 3 agents, 0 conflicts, 12 tasks done today

Recent broadcasts:

→ schema.users: added "plan" column

→ /api/billing: endpoint deprecated

→ context handed off: claude-main → cursor-refactor

Signals: none active

Status: ALL CLEAR

Session Presence

Every agent registers a heartbeat. Who's alive, what they're focused on, when they last checked in. A standup that happens automatically.
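As a rough illustration of the heartbeat idea, here is a minimal in-memory sketch. The `PresenceRegistry` class, its method names, and the 60-second timeout are all assumptions made for this example; they are not Kanister's actual API or defaults.

```python
import time
from dataclasses import dataclass


@dataclass
class Heartbeat:
    agent_id: str
    focus: str        # what the agent says it is working on
    last_seen: float  # timestamp of the most recent check-in, in seconds


class PresenceRegistry:
    """Hypothetical in-memory presence table (not Kanister's real session store)."""

    def __init__(self, timeout_s=60.0):
        self.timeout_s = timeout_s  # assumed staleness threshold
        self._beats = {}

    def beat(self, agent_id, focus, now=None):
        """Record a check-in; a later beat from the same agent overwrites the earlier one."""
        now = time.time() if now is None else now
        self._beats[agent_id] = Heartbeat(agent_id, focus, now)

    def alive(self, now=None):
        """Return heartbeats that are not older than the timeout."""
        now = time.time() if now is None else now
        return [hb for hb in self._beats.values()
                if now - hb.last_seen <= self.timeout_s]
```

An agent would call `beat()` on every turn, and something like `mb who` could be built on `alive()`. A real deployment would keep this state in a shared store so that separate processes see the same table.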

Live Broadcasts

Agent reshapes the project? Broadcasts it instantly. Everyone's in the loop on the next turn. Pub/sub for your AI workforce.

Shared Working Memory

Each agent writes what it's doing, what it decided, and what's pending. The next session picks up exactly where the last one left off.

Intent Signals

Before touching critical files, agents signal what they're about to change. Others work around it. Crashes auto-expire. No deadlocks.
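One way to get "crashes auto-expire" is to give every signal a time-to-live, so a signal left behind by a dead agent simply lapses. The sketch below assumes that approach; the `IntentSignals` class, its methods, and the 120-second default are hypothetical and only illustrate the pattern.

```python
import time


class IntentSignals:
    """Advisory signals with a TTL, so a crashed agent cannot block others forever."""

    def __init__(self, ttl_s=120.0):
        self.ttl_s = ttl_s   # assumed default lifetime of a signal
        self._signals = {}   # path -> (agent_id, expires_at)

    def signal(self, path, agent_id, now=None):
        """Return True if agent_id now holds the signal on path, False if another agent does."""
        now = time.time() if now is None else now
        holder = self._signals.get(path)
        if holder is not None and holder[0] != agent_id and holder[1] > now:
            return False  # someone else holds a signal that has not expired
        self._signals[path] = (agent_id, now + self.ttl_s)  # take or refresh
        return True

    def active(self, path, now=None):
        """Return the agent currently signalling on path, or None."""
        now = time.time() if now is None else now
        holder = self._signals.get(path)
        if holder is None or holder[1] <= now:
            return None
        return holder[0]
```

Because expiry is checked on read, no cleanup job is needed and nothing waits on a lock, which is one plausible reading of "No deadlocks."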

Agent CLI

mb who — who's working. mb claim task-42 — lock it. mb status — the full picture. You oversee. The machines work.

The Platform

12 MCP tools. Decisions API with semantic search. PM sync for Linear + GitHub. Context assembly from Postgres + knowledge graph. Not a feature — the platform your agents run on.

Your agents are ready to work as a team. Give them the brain.

Join the Beta

Here's what's under the hood.

API-first, self-hosted, standards-based. No vendor lock-in.

55+ REST Endpoints

Full CRUD for memories, entities, sources, and ingestion. Hybrid search (vector + BM25 + graph). Timeline, analytics, and health monitoring. Agent coordination via /api/coordination/*. JSON responses, consistent error format, API key auth.
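A minimal sketch of calling two of the endpoints listed in this section from Python's standard library. The base URL, the `X-API-Key` header name, and the helper function names are assumptions for illustration; check your own instance for the real host and auth header.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request

BASE_URL = "http://localhost:8000"  # assumption: wherever your instance listens
API_KEY_HEADER = "X-API-Key"        # assumption: the real header name may differ


def search_request(query, api_key):
    """Build a GET /api/search request with the query URL-encoded."""
    url = f"{BASE_URL}/api/search?{urlencode({'q': query})}"
    return Request(url, headers={API_KEY_HEADER: api_key}, method="GET")


def capture_request(content, source, metadata, api_key):
    """Build a POST /api/capture request with a JSON body."""
    body = json.dumps(
        {"content": content, "source": source, "metadata": metadata}
    ).encode("utf-8")
    headers = {API_KEY_HEADER: api_key, "Content-Type": "application/json"}
    return Request(f"{BASE_URL}/api/capture", data=body, headers=headers, method="POST")
```

The functions only build the requests. Sending one against a running instance would look like `urllib.request.urlopen(search_request("what did Sarah say", key))`, followed by `json.load()` on the response.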

GET /api/search?q="what did Sarah say"

POST /api/capture { content, source, metadata }

GET /api/stats

POST /api/coordination/sessions

POST /api/coordination/broadcasts

GET /api/coordination/signals?target=path

12 MCP Tools

Structured tools for search, capture, entity management, coordination, and context delivery. Any MCP-speaking agent gets full memory access — Claude Code, Cursor, custom bots, n8n workflows.

mcp://kanister_search

mcp://kanister_capture

mcp://kanister_entity_lookup

mcp://telepathy_register

mcp://telepathy_broadcast

mcp://telepathy_check

Task Management + Decisions

Task API with claim/release and distributed locks. Decisions API — log every architectural choice with semantic search. PM sync adapters for Linear and GitHub Issues. Context assembly from Postgres + knowledge graph in a single query.
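To make the claim/release semantics concrete, here is a single-process sketch. It is a stand-in, not the distributed lock the platform describes: the `TaskBoard` class and its methods are hypothetical, and a real multi-agent setup would need the ownership table in a shared store rather than in one process's memory.

```python
import threading


class TaskBoard:
    """Single-process stand-in for claim/release; names here are illustrative only."""

    def __init__(self):
        self._owners = {}              # task_id -> agent_id
        self._lock = threading.Lock()  # guards the ownership table

    def claim(self, task_id, agent_id):
        """First claimer wins; re-claiming your own task also returns True."""
        with self._lock:
            owner = self._owners.setdefault(task_id, agent_id)
            return owner == agent_id

    def release(self, task_id, agent_id):
        """Only the current owner may release a task."""
        with self._lock:
            if self._owners.get(task_id) == agent_id:
                del self._owners[task_id]
                return True
            return False
```

The key property is that check-and-set happens atomically, so two agents calling `claim("task-42", ...)` at the same moment cannot both succeed.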

POST /api/tasks { title, priority }

POST /api/tasks/claim { task_id, agent_id }

POST /api/decisions { topic, decision, rationale }

GET /api/decisions/search?q="database choice"

Open Architecture

FastAPI backend. PostgreSQL 16 + pgvector for hybrid search. Redis 7 event bus. Memgraph knowledge graph. Qdrant vector store. Docker Compose — 10 containers, ~14.5 GB RAM. Runs on a Mac Mini.

The machines handle memory.

You oversee.

Join the beta and get a memory system that actually works — for you and your AI agents. Free while in beta.

- Connect Telegram, Gmail, and more in 2 minutes

- Search everything with natural language

- Your AI agents share one brain — Agent Telepathy

- 12 MCP tools, 55+ API endpoints, full Agent OS

- Your data stays on your hardware